A Quantitative Study on the Evolution of Big Data (Supply Chain) Concepts

Big data offers new opportunities for the corporation to listen, test and learn, and respond faster.

The term big data is moving up the charts as a “hot topic.”

Last year we published our second quantitative study on the evolution of big data concepts. It gave us the opportunity to talk to supply chain leaders about the evolution of technologies and the use of analytics in the Race for Supply Chain 2020.

We wanted to understand where companies are in the adoption of big data concepts, and we had a good time writing the report on the research.

More than 120 respondents completed the study in June and July 2013, and the complete study can be accessed here.

What Did We Learn?

We believe that the adoption of new concepts for big data is a step change for supply chain teams. It is not about force-fitting new forms of data into applications based on relational databases. It cannot be treated as an evolution.

It requires change management. It is about small and iterative projects using new forms of analytics. The projects have to be based on a business problem and the focus needs to be on continuous learning.

This is quite different from the traditional waterfall project approach of mapping “as is” and “to be” states and managing a large project against a goal. Companies have to be open to the outcome and invest in innovation through analytics.

To drive success, companies have to sidestep the hype. While PowerPoint presentations on big data concepts abound, very few technology companies and consulting partners have built solutions to harness this opportunity, and even fewer supply chain leaders are ready to have the discussion.

We find the work by Aster Data (now a division of Teradata) and the work by IBM and Enterra Solutions on cognitive learning engines to be promising.

We are also encouraged by SAS’s work on unstructured text mining and Bazaarvoice’s work on the translation of blog and sentiment data, and the work by APT on test-and-learn strategies is exciting.

We are also encouraged by the work on cold chain and serialization by a number of consultants working on sensor data and counterfeiting.

The Findings

In the study, big data was defined along three dimensions: a volume of at least a petabyte, a variety of data that goes beyond traditional structured data, and processes built on the increased velocity of data associated with real-time flows. In the results, we see several trends:

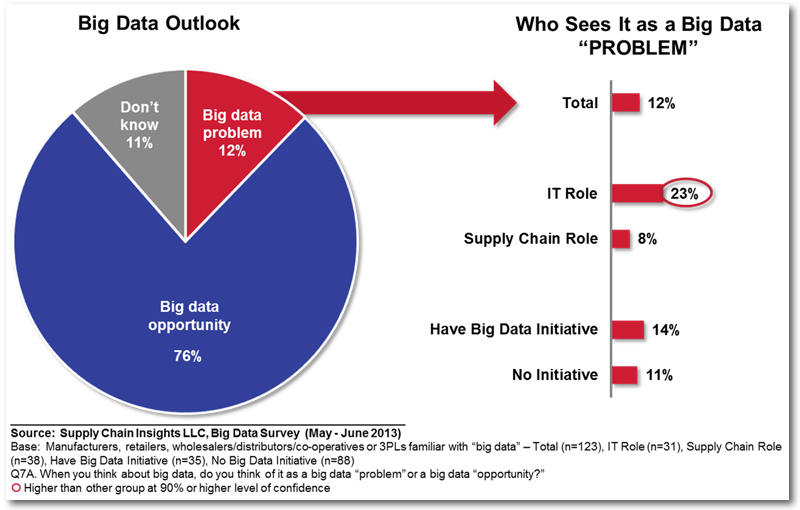

- The biggest opportunity is not with the volume or velocity of data. Instead, it lies in managing the opportunity associated with new forms of data. In the study, 76% of companies see big data as an opportunity and 12% see it as a problem.

- Databases are growing, but they can be managed. 15% of companies have a database today that is at least a petabyte. The largest databases are not Enterprise Resource Planning (ERP) systems. Instead, they are in the areas of product management or channel data.

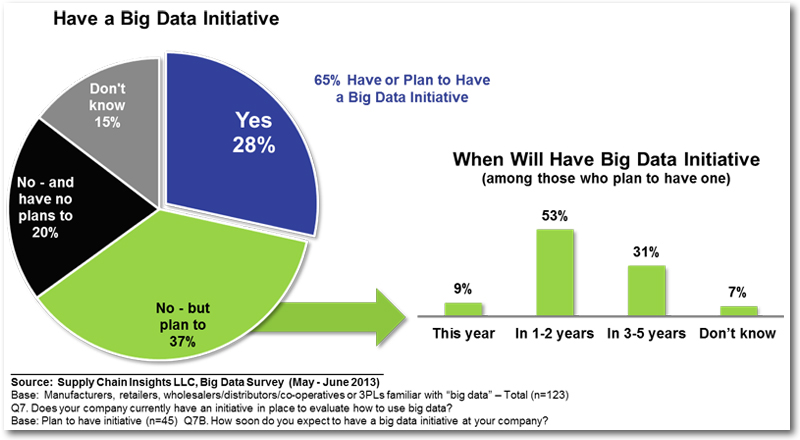

- The work is starting: 28% of companies have a big data initiative today, and 37% plan to implement a big data team.

- Companies that have worked on a data-driven culture have a leg up. Organizations that have active teams on Master Data Management (MDM) are more likely to have a big data cross-functional team. 54% of companies with big data initiatives believe that big data techniques help with MDM.

About the Author

Lora Cecere is the Founder and CEO of Supply Chain Insights, the research firm that’s paving new directions in building thought-leading supply chain research. She is also the author of the enterprise software blog Supply Chain Shaman. The blog focuses on the use of enterprise applications to drive supply chain excellence. Her book, Bricks Matter, was published in December 2012.